Hello! I’m not planning on wading into the Topical like this very often, but I think the particular fanciful breathlessness with which some quarters have received recent developments in artificial intelligence is a nice opportunity to articulate some of the ways I am trying to think about narrative art in this newsletter. Should be a one-off thing. Thanks again for reading (even just this far)!

There are a few silly ways to interpret science fiction stories. One is to reduce them to a series of predictions. It’s interesting when a writer is able to predict flat-screen televisions, or blogging, or whatever, but this mode of interpretation risks reducing works of art to check-boxes: Star Trek may have been wrong about the possibility of warp drive, but they were right that people would use tablets, so it was 50% good. These kinds of predictions are fun, but I think they’re ultimately peripheral.

Another, more perniciously silly way to interpret SF stories is by thinking (or by acting in such a way that it looks like you think) that the brutal stuff in them is actually cool. Jeff Bezos’s mystifying love for the Expanse series is an example of this I think about sometimes. One of the nastiest members of our current ruling class (to whom we will return) financially underwrote the televisual adaptation of a media franchise largely about how miserable class exploitation is. Or here is an article on “Entrepreneur.com” in which the film Minority Report (based on a Philip K. Dick story), about the dire consequences of “predictive policing,” is reduced to a prescient depiction of swiping on a phone with your finger. (In the fantasy register, Peter Thiel called his company “Palantir.”)

A less-obvious-but-still-silly way to interpret these stories is as one-to-one lessons about reality. For example: in James Tiptree’s marvelous “And I Awoke and Found Me Here on the Cold Hill’s Side,” humans have discovered aliens who are incredibly hot, but these aliens think human beings are not hot at all. It’s a story about a lot of things: that there is nothing guaranteeing that what we discover about the universe will grant us better lives; that technological development can inspire desires in us that have the potential to destroy us; that unrequited love feels like some kind of cosmic nightmare—it’s about all of these, but these interpretations don’t exhaust the story, because the story is actually, literally, about a guy who falls in love with the aliens even though they will never want to fuck him. There is a lot to be learned from this story, a lot that it gives us to think about—but I do not personally think that the best takeaway from “And I Awoke and Found Me Here on the Cold Hill’s Side” is that we should cancel SETI because we might discover aliens that don’t want to fuck us.

This is all just to say that science fiction exists at an interesting interpretive crossroads. On the one hand, it’s (in my opinion) at its best when it can be taken literally, as if the world it’s describing is real, and not merely as an allegory for ordinary reality. But one of the least useful things I think you can do with a science fiction story is drag its literal sense into the real world in the ways I’ve described.[1]

I don’t think this is just a question for speculative fiction. I’ve participated in many conversations about, for example, whether a character’s actions in a work of narrative art were ethical. Many of these conversations have been rich, interesting, productive, etc.! But they have also, in my experience, frequently elided the fact that the ethical situations set up by writers are inevitably reductive mock-ups of real life, artificially constrained by all sorts of things. This is, as they say, a feature, not a bug—Samuel R. Delany (making here the second of probably many appearances in this newsletter) says somewhere that one of the hopes undergirding the novel as a form is the hope that artfully simplifying reality can, in fact, clarify it, and I believe it can. But I’ve seen conversations of this sort get frustrating and thorny enough times to recognize that it’s pretty easy to let a writer’s decisions back us into a corner.

(The most obvious example of this I can think of, almost too obvious, is the wretched “trolley problem.” I am much less interested in deciding who to kill with my runaway train than in figuring out a) how the situation got so dire and b) why I, of all people, am behind the controls.)

This is all obvious stuff, but I’ve found it fruitful, personally, to think it through. I guess I’m most interested in the concept of defamiliarization—that art estranges us from reality so that we can return to reality with a new understanding of it. But in order to do this, we have to return to reality.

More like artificial, uh, unintelligence

I have always had a soft spot for the science-fictional conceit of artificial intelligence. The Asimov fix-up I, Robot made an enormous impact on me as a kid. The icy unreality of Do Androids Dream of Electric Sheep?, the hellish vision of “I Have No Mouth, and I Must Scream,” the weird phenomenological drama of Ann Leckie’s Imperial Radch trilogy—these are cool conceits, because they use AI to tell an interesting story.

At the risk of stating the obvious, I believe that the story they by and large tell is not the story of what we are currently calling AI.

Many of these stories explore the implications of AI personhood. These stories tend to go a certain way: an artificial intelligence is not recognized as a sentient, feeling being, when to the reader/viewer it is obvious that the AI is both of these things. (Perhaps the best example of this is the classic Star Trek: The Next Generation episode “The Measure of a Man,” in which this question as it pertains to the android character Data is put to a literal trial.)

I think that the weird moral convulsion over the ethics of computer sentience, occasioned by recent developments in AI, is a bit misguided. I don’t mean to say that AI in general isn’t a big deal. I am not an expert on it; as best as I can tell, it is a remarkable achievement in the field of data science, with wide-ranging implications. And I certainly don’t mean to say that the ethics of AI aren’t worth taking seriously: we really do need to think through the ethical implications of artificial intelligence technology, much as we need to think through the ethical implications of all technology. This is a vital task, and it is highly disturbing when companies abandon ethical questions in favor of maximizing short-term gains (as they always do).

But there is one particular story which many people seem to be telling themselves about artificial intelligence: namely, that we are either on the cusp of inventing a new form of sentience or have already done so. This story seems to me deeply influenced by the science fiction of the twentieth and twenty-first centuries, and I do not think it is the right one.

(This letter should, but does not, include an account of why I don’t think that what we call AI right now is anywhere close to “sentience.” If you’re looking for a very sharp take on it all which says it better than I could and with which I completely agree, check out this Ted Chiang article; it’s a good account of how to think about AI in a way that doesn’t succumb to the relentless desire to interpret language as meaningful.)

Why are people freaking out about this? There are a lot of reasons, but two in particular come to mind: a truly astonishing branding coup on the part of those responsible for marketing technologies like Large Language Models, and a naive interpretation of stories about AI. Why is it so seductive to take these stories literally? I’m going to start by giving a (very rough) account of a few reasons why we’re primed to leap to the conclusion that a computer can be conscious. If that sounds boring to you, feel free to skip ahead to the section after it, where I will discuss the movie Blade Runner.

You can’t build an animal out of stuff

If you’ll forgive a harrowing oversimplification: at a certain point (frequently attributed to the work of, among others, Robert Boyle and Francis Bacon), early modern thinkers began to interpret the world in terms of a machine. (By “machine” I mean a particular combination of discrete, reproducible parts which use power to perform a specific, predetermined task.) This was a methodological assumption—not necessarily a metaphysical one. It was useful to think of nature as a machine (a “clockwork universe”) in order to perform experiments, make observations, and confirm or disconfirm hypothetical explanations—but this didn’t necessarily imply that the universe was, in the final assessment, a machine.[2]

As machines after the industrial revolution become more and more complicated, it becomes more and more tempting to believe that nature really is just a machine—which is to say (for those who don’t believe in a supernatural soul distinct from the body) that the human being is really just a machine. Eventually, of course, along comes the modern computer, which is a machine capable of processing unprecedentedly abstract inputs. Hey, people think, if people are natural, and nature is a machine, then people are machines; and if people deal with complex abstractions in a particular way, and a computing machine can deal with complex abstractions in a particular way, maybe people are just computers. It’s a perfectly logical thought process—if you start from assumptions I, at least, regard as suspect.

In case this wasn’t obvious, I will put my cards on the table: I do not think the mind is a computer. The human being is an organism, which means it works sort of like a machine in some ways, but not in others—but even if you do believe that animals are fancy machines, there’s no real reason to believe that the computer is an equally and identically fancy machine. For one, computers are binaristic, composed ultimately of a titanic quantity of on-and-off switches in various states. Neuronal states are not binaristic; they are “analog.”

The comparison breaks down on other orders, too. At a higher level of abstraction, computers function by means of algorithms: mathematical functions that take quantitative inputs and transform them into quantitative outputs. I don’t think this is how the mind works, either. There is a robust philosophical debate around this question, but intuitively it seems to me to reduce down to the question of whether all experience is reducible to the same kind of thing. Everything you do with a computer, no matter how complex, is reducible to quantities, which are themselves reducible down to the state of being “on” or “off.” But there are just too many kinds of experience. Fresh air, something tasting bad, having a friend, a catchy song, grief, sexual desire, understanding a thought—these experiences are interconnected, and they all exist within the same body; but beyond that, I’m not sure what the common denominator would be. Perhaps a computer could represent them to a greater or lesser degree of accuracy, but representation isn’t replication. (Not coincidentally, in my opinion, this universal commensurability—the idea that everything is reducible to one kind of thing—also underpins free-market ideologies.)

People have always compared internal reality to external reality—there’s just nothing else to think with. In the past, people compared the mind to things like “the ocean” and “the wind.” These days, they compare the mind to a computer. But the idea that the mind is a computer is exactly as much of a metaphor as the ancient idea that the mind is an ocean. There is stuff that’s useful about thinking this way: insights we can have that we could not have otherwise had. But like all metaphors, it’s incomplete. And it’s more of a problem when thinking about AI, because the idea that the mind is a machine means, of course, that a machine could (straightforwardly and soon) be a mind.

Movie time

I’ve argued that the inheritors of whatever particular scientific-philosophical tradition it is I’m talking about, if I’m talking about something real at all, are primed to receive the argument that a computer can be a mind. Let’s turn to a cool movie about AI that we all like watching: Blade Runner.

Taken literally, Blade Runner (based, of course, on the Philip K. Dick novel Do Androids Dream of Electric Sheep?, which is a much crazier, and therefore better, title) is making the same argument as those who assert that AI might be sentient. Are replicants algorithms which spit out information, or is it like something to be one? The “tears in rain” speech is so moving in part because it’s such a powerful affirmative answer. “I’ve seen things you people wouldn’t believe,” Roy says. It’s a brilliant formulation. What is valuable about replicant experience is its unfathomability, its difference from human experience. The speech turns anti-replicant reasoning on its head: humans think that their subjectivity is unique, but replicant subjectivity is so unique that humans (“you people,” a classic bigoted address) can’t even understand it. The opacity of replicant psychology is evidence not of its inferiority but of its unfathomability. It is “like something” to be a replicant, and it’s extraordinarily special.

So within the universe of Blade Runner, I think it’s safe to say that it’s probably bad to kill the robots. I would not feel good about decommissioning a replicant. (The uncertainty at the heart of the film is, of course, whether or not our hero, the replicant-hunter Deckard, is actually himself a replicant.) Still, I think it’s naive to say that the moral of the story is that if something passes the Turing Test, it has a soul and we shouldn’t kill it. (I’d argue it’s a mistake of the kind I outlined earlier with reference to “And I Awoke and Found Me Here on the Cold Hill’s Side.”) There is no moral of Blade Runner, because there’s not a moral of most stories, but if I had to paraphrase its subject in moral terms, I’d say it’s about the dangerous fact that technology destabilizes our intuitions about selfhood and personality. The question of whether or not technology displaces the self beyond recognition goes back at least (sorry) to Plato’s Phaedrus, in which Socrates argues that writing is a technology which might replace rather than supplement memory. It’s a really old question, and one of the reasons I like Blade Runner is that I think it phrases that question well.

This is, in my opinion, a richer interpretive angle, both for taking Blade Runner seriously as a work of narrative art and for understanding how it might touch the real world. (The pithy way to phrase my understanding of the speculative conceit: the best conceits don’t allegorize the world, but they do touch it.)

On the material conditions of cultural reception; or, why being rich makes you stupid

It’s famously difficult to understand the relationship between culture and economic conditions. I can’t demonstrate that there is a causal connection between the prevalence of a fictional trope and what I perceive (perhaps wrongly) as a cultural intuition.

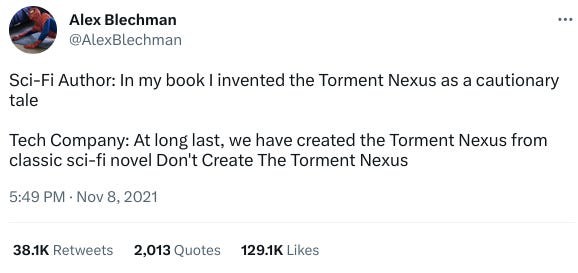

But I do think there’s something here. Blake Lemoine, the Google engineer who was fired after going public with his conviction that the company’s most recent chatbot model, LaMDA, was potentially sentient, writes in terms of Asimov’s famous, paradigm-setting Three Laws of Robotics:

These AI engines are incredibly good at manipulating people. Certain views of mine have changed as a result of conversations with LaMDA. I'd had a negative opinion of Asimov's laws of robotics being used to control AI for most of my life, and LaMDA successfully persuaded me to change my opinion. This is something that many humans have tried to argue me out of, and have failed, where this system succeeded.

To be clear, I don’t think that science fiction authorizes, let alone causes, the bad behavior of panicky AI engineers, to say nothing of our oligarchs. Still, it’s a disturbing fact about the genre’s reception that, among others, some of the most innovatively vicious members of the ruling class have explicitly cited classics of the science-fictional genre as influences. What’s going on there?

To turn to our robot-fearing overlords, briefly: I don’t think it’s all that deep. Attempts to paint Elon Musk, for example, as some kind of intellectual are self-evidently ludicrous. He’s a robber baron and a con artist. Perhaps more interesting is the case of the man I consider perhaps the ultimate motherfucker of our times, Jeff Bezos. From Franklin Foer’s 2019 profile:

At the heart of this faith is a text Bezos read as a teen. In 1976, a Princeton physicist named Gerard K. O’Neill wrote a populist case for moving into space called The High Frontier, a book beloved by sci-fi geeks, NASA functionaries, and aging hippies. As a Princeton student, Bezos attended O’Neill seminars and ran the campus chapter of Students for the Exploration and Development of Space. Through Blue Origin, Bezos is developing detailed plans for realizing O’Neill’s vision.

The professor imagined colonies housed in miles-long cylindrical tubes floating between Earth and the moon. The tubes would sustain a simulacrum of life back on the mother planet, with soil, oxygenated air, free-flying birds, and “beaches lapped by waves.” When Bezos describes these colonies—and presents artists’ renderings of them—he sounds almost rapturous. “This is Maui on its best day, all year long. No rain, no storms, no earthquakes.” Since the colonies would allow the human population to grow without any earthly constraints, the species would flourish like never before: “We can have a trillion humans in the solar system, which means we’d have a thousand Mozarts and a thousand Einsteins. This would be an incredible civilization.”

Beneath all the grand rhetoric about the fate of humanity, beneath the pontificating and the pseudo-philanthropic posturing, I really do believe that Bezos wants to go to space because he thinks going to space would be cool. I think that’s all it is. After a paragraph summarizing his lifelong Star Trek fandom—by high school, he was already completely devoted to going to space—Foer writes: “Most mortals eventually jettison teenage dreams, but Bezos remains passionately committed to his, even as he has come to control more and more of the here and now.”

The capitalist mode of production is, among many, many other things, a convoluter of desire. The violence of our world takes basic human wants and distorts them beyond recognition. The hope that one’s loved ones will be safe becomes doomsday prepping; the need to love and be loved becomes the marriage contract; the desire to know other people and be known by them becomes a branding exercise. And it goes all the way down: the desire to look attractive is rendered contingent on sweatshops and animal experimentation, the desire to eat well is wrapped up in impossibly destructive farming practices, the desire to live somewhere stable is only achievable in terms of property, which is to say on the foundation of a vast, centuries-old scheme of unbelievably violent colonial expropriation. These are all completely reasonable things to want, but to get them requires, for many of us, some degree of violence.

It’s completely understandable to believe that going to space would be cool. I think going to space would be cool! I love Star Trek! It’s exciting to imagine an egalitarian and collective effort to explore outer space. But to a person like Bezos, for whom the cosmopolitan communitarianism undergirding the Star Trek universe is unthinkable, the next best thing is just doing it yourself. The fact that he is unruffled by the millions of people whose lives his corporation crushes has nothing to do with science fiction, as such—nobody in the ruling class cares about the millions of people they exploit. His readerly insensitivity to the class-conscious dimension of the stories he likes (he’s an Iain Banks fan!) is the same insensitivity that allows him to overlook the blood on which his empire is founded.

The stories we tell ourselves about AI are, of course, extrapolations from the world we live in, and the world we live in makes it hard for a lot of people to imagine a world not founded on terror and violence. The AI horror stories we hear from tech magnates and “thought leaders”—that a computer will decide to start an immensely destructive war, or torture those disloyal to it—are things that are already happening, just not by computers. I’m not the first to point out that these are textbook projections: world-historically materially comfortable citizens of the United States are suddenly afraid that they might be subject to horrifying and sudden violence from a remote and impersonal power, or might be tortured by a shadowy force demanding something deranged and irrational? The fear underlying Sentient AI Anxiety—that we might accidentally wind up creating a system which causes unfathomable suffering to vast quantities of beings not everyone recognizes as sentient—has, well, more than a few real historical analogues. It is infuriating to watch the tech sector of the ruling class piss their thousand-dollar cargo pants over something like this when their very existence is predicated on unfathomable quantities of real, actually-existing, present-tense violence.

All of this is just to say that our capacity to interpret stories about technology is only as extensive as our capacity to imagine the world. Technology is precisely what we make of it; the problem is, here as so often, who’s included in that “we.” The task is to build a society where widespread human exploitation is unthinkable, where our desires can be realized with a minimum of violence: to make the world safe for our stories about it.

[1] As soon as I wrote this, I started thinking about how it’s completely wrong. I guess I’ll introduce a methodological principle here: if a generalization I throw out there is useful, use it; if not, you share the right that I am here reserving—namely, to throw it in the damn trash can.

But more specifically: there are science-fictional works meant to be taken more literally than others. Kim Stanley Robinson’s recent output comes to mind. As his fictional worlds grow closer and closer to our own, they are meant more and more to provide a kind of practical affective-theoretical toolkit for dealing with climate catastrophe. But I’d venture to say that most speculative fiction, for better and for worse, has a somewhat looser connection to contemporary reality than this; minimally, at least, I’d say this is a useful way to think about some SF.

[2] As Steven Shapin and Simon Schaffer argue in Leviathan and the Air-Pump, the turn to the mechanistic philosophy in fact occasioned, over and against the Hobbesian understanding that reality could be deduced from first principles, a novel humility about the possibility of knowing things like whether the universe was really just a machine: “In the root metaphor of the mechanical philosophy, nature was like a clock: man could be certain of the hour shown by its hands, of natural effects, but the mechanism by which those effects were really produced, the clockwork, might be various” (24).